The AI era of JS is here!

Published on 2024-12-25

Introduction to JS-Torch

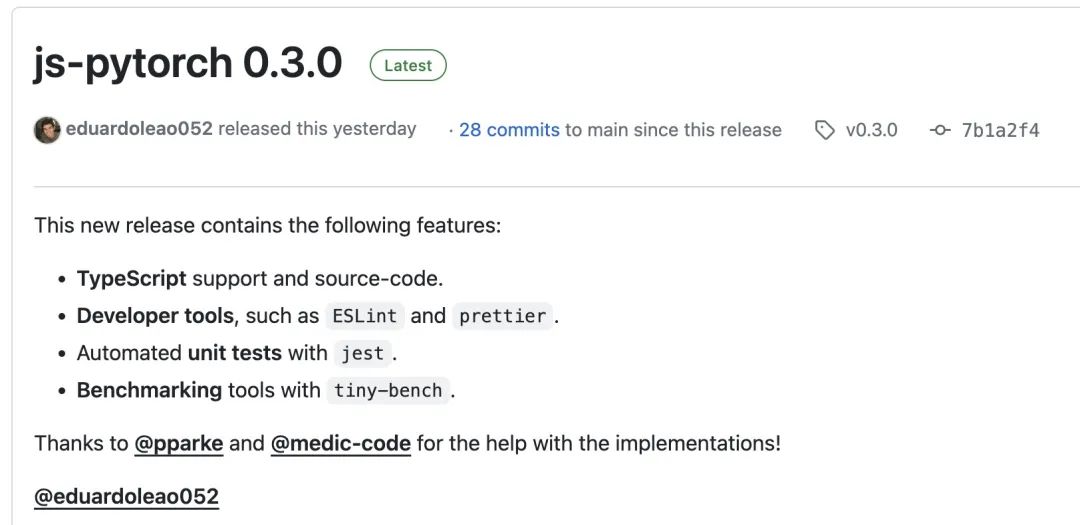

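JS-Torch [1] is a deep learning library for JavaScript whose syntax closely mirrors PyTorch. It includes a fully featured tensor object (with gradient tracking), deep learning layers and functions, and an automatic differentiation engine. It is aimed at deep learning research in JavaScript and provides a range of tools and functions that speed up development.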

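PyTorch [2] is an open-source deep learning framework developed and maintained by Meta's research team. It provides a rich set of tools and libraries for building and training neural network models. Its design favors simplicity and flexibility: the dynamic computation graph makes model construction more intuitive and makes models easier to debug and optimize. PyTorch also scales well and runs efficiently, which has made it widely used in deep learning.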
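To make "gradient tracking" and "automatic differentiation engine" concrete, here is a minimal sketch of reverse-mode autodiff on scalars in plain JavaScript. It is not JS-Torch's implementation: the `Value` class and its methods are hypothetical names invented for this illustration. The graph is recorded while the forward computation runs, which is the "dynamic computation graph" idea described above.

```javascript
// Minimal scalar reverse-mode autodiff, for illustration only.
// `Value` is a hypothetical class, not part of js-pytorch.
class Value {
  constructor(data, parents = [], backwardFn = () => {}) {
    this.data = data;             // result of the forward computation
    this.grad = 0;                // d(output)/d(this), filled in by backward()
    this.parents = parents;       // nodes this value was computed from
    this.backwardFn = backwardFn; // pushes this node's grad to its parents
  }

  add(other) {
    const out = new Value(this.data + other.data, [this, other], () => {
      this.grad += out.grad;
      other.grad += out.grad;
    });
    return out;
  }

  mul(other) {
    const out = new Value(this.data * other.data, [this, other], () => {
      this.grad += other.data * out.grad;
      other.grad += this.data * out.grad;
    });
    return out;
  }

  backward() {
    // Topologically sort the graph recorded during the forward pass.
    const order = [];
    const seen = new Set();
    const visit = (node) => {
      if (seen.has(node)) return;
      seen.add(node);
      node.parents.forEach(visit);
      order.push(node);
    };
    visit(this);

    // Seed the output gradient, then walk the graph from output to inputs.
    this.grad = 1;
    for (const node of order.reverse()) node.backwardFn();
  }
}

const x = new Value(2);
const w = new Value(3);
const b = new Value(1);
const out = x.mul(w).add(b); // out = x * w + b
out.backward();
console.log(out.data, x.grad, w.grad, b.grad); // 7 3 2 1
```

A tensor library applies the same idea to whole arrays, with each operation knowing how to pass gradients back to its inputs.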

You can install js-pytorch with npm or pnpm:

    npm install js-pytorch
    pnpm add js-pytorch

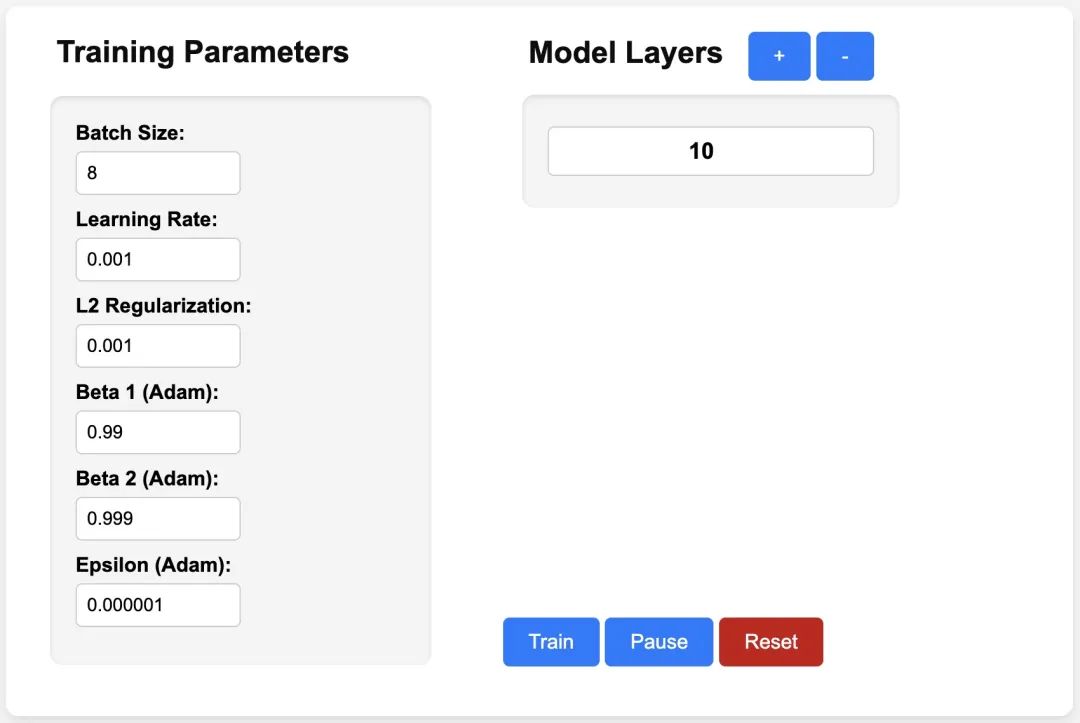

Or try the online Demo [3] provided by js-pytorch:

https://eduardoleao052.github.io/js-torch/assets/demo/demo.html

Features JS-Torch already supports

JS-Torch currently supports tensor operations such as Add, Subtract, Multiply, and Divide, as well as common deep learning layers such as Linear, MultiHeadSelfAttention, ReLU, and LayerNorm.

Tensor Operations

- Add

- Subtract

- Multiply

- Divide

- Matrix Multiply

- Power

- Square Root

- Exponentiate

- Log

- Sum

- Mean

- Variance

- Transpose

- At

- MaskedFill

- Reshape
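As a rough illustration of what three of the operations listed above compute (Transpose, Matrix Multiply, MaskedFill), here is a plain-array sketch. The helper functions are hypothetical and handle only 2-D arrays; they are not the JS-Torch API, which also supports batched tensors and gradient tracking.

```javascript
// Plain-array sketches of three operations from the list above.
// These helpers are hypothetical and only handle 2-D arrays.

// Transpose: swap rows and columns.
function transpose(a) {
  return a[0].map((_, j) => a.map((row) => row[j]));
}

// Matrix multiply: (n x k) times (k x m) gives (n x m).
function matmul(a, b) {
  if (a[0].length !== b.length) throw new Error("shape mismatch");
  return a.map((row) =>
    b[0].map((_, j) => row.reduce((sum, v, k) => sum + v * b[k][j], 0))
  );
}

// Masked fill: replace entries where the mask is true with a fixed value.
function maskedFill(a, mask, value) {
  return a.map((row, i) => row.map((v, j) => (mask[i][j] ? value : v)));
}

const a = [[1, 2, 3], [4, 5, 6]];        // shape [2, 3]
const product = matmul(a, transpose(a)); // shape [2, 2]
console.log(product);                    // [[14, 32], [32, 77]]

// Causal-attention style mask: hide everything above the diagonal.
const mask = [[false, true], [false, false]];
const masked = maskedFill(product, mask, -Infinity);
console.log(masked);                     // [[14, -Infinity], [32, 77]]
```

Filling masked positions with `-Infinity` is the usual trick before a softmax, since those positions then receive zero probability.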

Deep Learning Layers

- nn.Linear

- nn.MultiHeadSelfAttention

- nn.FullyConnected

- nn.Block

- nn.Embedding

- nn.PositionalEmbedding

- nn.ReLU

- nn.Softmax

- nn.Dropout

- nn.LayerNorm

- nn.CrossEntropyLoss
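For readers less familiar with the last entries in this list, the sketch below shows the math behind a softmax and a cross-entropy loss in plain JavaScript. It assumes the common convention that the loss takes raw logits and integer class targets; the `softmax` and `crossEntropy` helpers are hypothetical and are not the code behind `nn.Softmax` or `nn.CrossEntropyLoss`.

```javascript
// Numerically stable softmax over one row of logits.
function softmax(logits) {
  const max = Math.max(...logits); // subtracting the max avoids overflow in exp
  const exps = logits.map((v) => Math.exp(v - max));
  const total = exps.reduce((s, v) => s + v, 0);
  return exps.map((v) => v / total);
}

// Mean cross-entropy over a batch: -log(probability of the target class).
function crossEntropy(batchLogits, targets) {
  const losses = batchLogits.map(
    (logits, i) => -Math.log(softmax(logits)[targets[i]])
  );
  return losses.reduce((s, v) => s + v, 0) / losses.length;
}

const probs = softmax([1, 2, 3]);
console.log(probs.reduce((s, v) => s + v, 0)); // sums to 1, up to rounding

// Uniform logits over 4 classes give a loss of ln(4), whatever the target.
const loss = crossEntropy([[0, 0, 0, 0], [5, 5, 5, 5]], [2, 0]);
console.log(loss, Math.log(4));
```

The uniform-logits case is a handy sanity check: an untrained classifier over `n` classes should start with a loss near `ln(n)`.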

JS-Torch usage examples

Simple Autograd

    import { torch } from "js-pytorch";

    // Instantiate Tensors (the second argument is requires_grad):
    let x = torch.randn([8, 4, 5]);
    let w = torch.randn([8, 5, 4], true);
    let b = torch.tensor([0.2, 0.5, 0.1, 0.0], true);

    // Make calculations:
    let out = torch.matmul(x, w);
    out = torch.add(out, b);

    // Compute gradients on whole graph:
    out.backward();

    // Get gradients from specific Tensors:
    console.log(w.grad);
    console.log(b.grad);

Complex Autograd (Transformer)

    import { torch } from "js-pytorch";
    const nn = torch.nn;
    const optim = torch.optim;

    // Example hyperparameters (illustrative values; the original snippet leaves them undefined):
    const vocab_size = 52;
    const hidden_size = 32;
    const n_timesteps = 16;
    const n_heads = 4;
    const dropout_p = 0;
    const batch_size = 8;

    class Transformer extends nn.Module {
      constructor(vocab_size, hidden_size, n_timesteps, n_heads, p) {
        super();
        // Instantiate Transformer's Layers (the last Block argument is dropout_p):
        this.embed = new nn.Embedding(vocab_size, hidden_size);
        this.pos_embed = new nn.PositionalEmbedding(n_timesteps, hidden_size);
        this.b1 = new nn.Block(hidden_size, hidden_size, n_heads, n_timesteps, p);
        this.b2 = new nn.Block(hidden_size, hidden_size, n_heads, n_timesteps, p);
        this.ln = new nn.LayerNorm(hidden_size);
        this.linear = new nn.Linear(hidden_size, vocab_size);
      }

      forward(x) {
        let z;
        z = torch.add(this.embed.forward(x), this.pos_embed.forward(x));
        z = this.b1.forward(z);
        z = this.b2.forward(z);
        z = this.ln.forward(z);
        z = this.linear.forward(z);
        return z;
      }
    }

    // Instantiate your custom nn.Module:
    const model = new Transformer(vocab_size, hidden_size, n_timesteps, n_heads, dropout_p);

    // Define loss function and optimizer (Adam arguments: parameters, lr, reg):
    const loss_func = new nn.CrossEntropyLoss();
    const optimizer = new optim.Adam(model.parameters(), 5e-3, 0);

    // Instantiate sample input and output:
    let x = torch.randint(0, vocab_size, [batch_size, n_timesteps, 1]);
    let y = torch.randint(0, vocab_size, [batch_size, n_timesteps]);
    let loss;

    // Training Loop:
    for (let i = 0; i < 40; i++) {
      // Forward pass through the Transformer:
      let z = model.forward(x);

      // Get loss:
      loss = loss_func.forward(z, y);

      // Backpropagate the loss using torch.tensor's backward() method:
      loss.backward();

      // Update the weights:
      optimizer.step();

      // Reset the gradients to zero after each training step:
      optimizer.zero_grad();
    }

With JS-Torch, the day when AI applications run on JS runtimes such as Node.js and Deno is getting closer. For JS-Torch to gain wider adoption, though, it still has to solve one important problem: GPU acceleration. There is already a discussion on this topic; if you are interested, you can read more in GPU Support [4].

References

[1] JS-Torch: https://github.com/eduardoleao052/js-torch

[2] PyTorch: https://pytorch.org/

[3] Demo: https://eduardoleao052.github.io/js-torch/assets/demo/demo.html

[4] GPU Support: https://github.com/eduardoleao052/js-torch/issues/1

产品推荐

-

售后无忧

立即购买>- DAEMON Tools Lite 10【序列号终身授权 + 中文版 + Win】

-

¥150.00

office旗舰店

-

售后无忧

立即购买>- DAEMON Tools Ultra 5【序列号终身授权 + 中文版 + Win】

-

¥198.00

office旗舰店

-

售后无忧

立即购买>- DAEMON Tools Pro 8【序列号终身授权 + 中文版 + Win】

-

¥189.00

office旗舰店

-

售后无忧

立即购买>- CorelDRAW X8 简体中文【标准版 + Win】

-

¥1788.00

office旗舰店

-

正版软件

正版软件

- 消息称微软抽调 Teams 等部门员工组建专门团队,全力推进 Copilot

- 本站4月4日消息,国外媒体BusinessInsider披露了一份微软内部备忘录,透露微软内部正构建一支专门团队,负责推进Copilot及其相关产品的后续开发。该备忘录由微软公司人工智能工作副总裁里德·斯帕塔罗(JaredSpataro)撰写,表示计划调整现有Teams部门成员工,抽调员工加入这支新的Copilot团队之外,还会进行适当的裁员。尽管备忘录中没有提及具体的裁员人数和转岗人数,但显然微软的开发重心已经放在Copilot上,抽调其他部门成员推进该项目的发展。微软公关部主管弗兰克・肖(FrankS

- 4分钟前 微软 AI Copilot 0

-

正版软件

正版软件

- 起亚全新K4轿车亮相纽约车展,预告掀背版车型即将登场

- 3月28日消息,起亚汽车在2024纽约车展上展示了全新一代K4轿车,并预告了即将推出的K4+Hatchback两厢掀背版车型。这款备受期待的新车将在美国市场推出,为消费者提供更多选择。小编了解到,全新的K4紧凑型轿车已经取代了之前的福瑞迪(Forte)车型,展现出全新的外观设计风格。这款轿车在动力方面表现出色,标配147匹马力2.0L自然吸气四缸发动机,同时提供选配190匹马力1.6T涡轮增压四缸发动机,满足不同消费者的驾驶需求。K4通过提供超过29项ADAS驾驶辅助功能,完善了其强大的动力性能。这些功能

- 19分钟前 起亚 0

-

正版软件

正版软件

- 伊克罗德信息与墨奇科技战略合作,共塑生成式AI未来

- 现今,数字化浪潮全球席卷,人工智能技术以其强大的潜力和广泛的应用前景,正引领着新一轮的科技革命。近日,伊克罗德信息与墨奇科技正式宣布双方达成战略合作,双方将围绕生成式AI技术展开,发挥各自的技术优势和资源优势,利用大语言模型LLM、向量数据库构建生成式AI应用解决方案,通过检索增强生成RAG提升性能并降低模型成本,打造企业AI新范式,为企业带来更加智能化、便捷的服务体验。优势互补,共探生成式AI的无限可能墨奇科技副总裁孟卓飞强调,当前我们正面临着生成式AI这一前所未有的时代级机会,它不仅改变着每个人的工作

- 34分钟前 生成式AI 伊克罗德信息 墨奇科技 0

-

正版软件

正版软件

- 争议升级:西数WDDA硬盘警告开机3年后换新

- 6月15日消息,近日有使用群晖NAS(网络附加存储)的用户反馈称,在他们的设备上,即使硬盘运行正常,也会出现需要更换硬盘的警告。根据用户的描述,群晖的操作系统管理界面(DiskStationManager,简称DSM)显示出警告标记,提示用户考虑更换硬盘,原因是硬盘已经使用了相当长的时间。群晖官方回应称,这些警告信息并非来自群晖自身,而是由硬盘制造商西部数据(WesternDigital,简称WD)提供的。据小编了解,受影响的一些硬盘产品包括WDRedPro、WDRedPlus和WDPurple,这些产品

- 49分钟前 警告 西数硬盘 换新 0

-

正版软件

正版软件

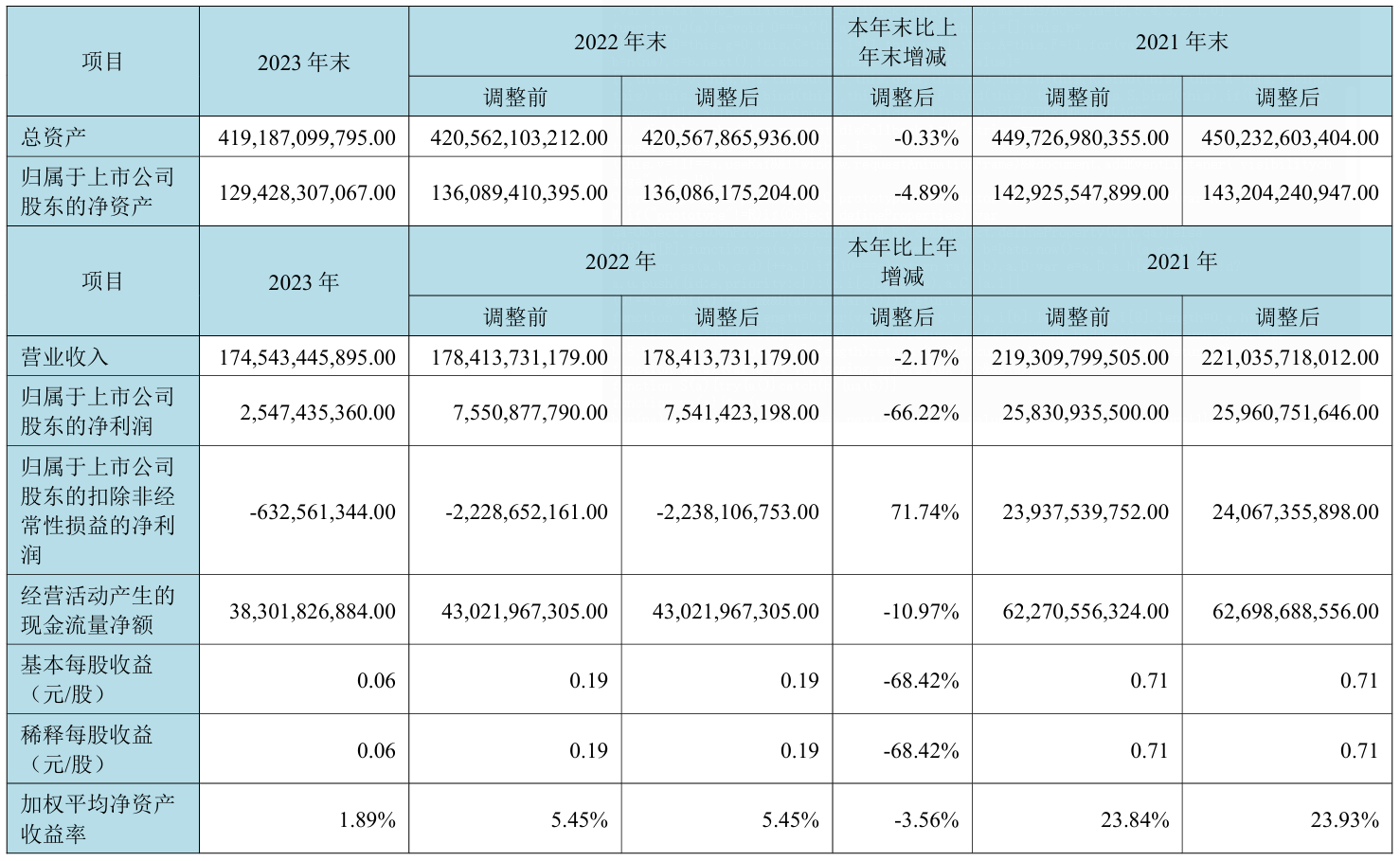

- 京东方 2023 年营收约 1745.43 亿元同比降 2.17%,净利润约 25.47 亿元

- 本站4月1日消息,京东方今晚发布2023年度报告,数据显示该公司2023年营收约1745.43亿元,同比减少2.17%;归属上市公司股东净利润约25.47亿元,同比减少了66.22%;基本每股收益0.06元,同比减少68.42%。2023年1至6月份,京东方A的营业收入构成为:显示器件业务占比84.66%,物联网创新业务占比21.72%,智慧健康服务占比1.69%,MLED业务占比0.57%,传感器及解决方案业务占比0.23%。本站附京东方详细报告情况如下图:京东方董事长陈炎顺表示,公司2023年全年LC

- 1小时前 11:40 京东方 0